Red Piranha’s Crystal Eye UTM appliances are multi-core systems on which multi-threaded applications can use the underlying hardware for high performance. Multi-threading lets the system scale by adding threads for the different applications that inspect incoming traffic before it is forwarded to the protected network.

One such application on Crystal Eye appliances is the Suricata engine, which provides the Intrusion Detection and Prevention System (IDPS). Suricata is a high-performance, multi-threaded IDS, IPS and network monitoring engine capable of handling multiple gigabits per second of traffic without packet loss. Because a single uninspected packet can let an intrusion into a network, Crystal Eye uses Suricata to build high-speed, lossless and highly secure networks.

In our efforts to tune Crystal Eye appliances for the best performance on high-speed networks, Red Piranha has achieved 60 Gbps of Suricata throughput in the lab on a single 2U unit built from commodity hardware.

Test Configuration

Tests were performed on a Series-80 appliance: a dual-socket system with two Xeon E5-2697 v4 CPUs (Hyper-Threading enabled, 72 logical cores in total) and 128 GB of RAM, running Ubuntu 18.04.2 LTS. Two dual-port Intel XL710 40GbE cards received the traffic, which was replayed by the TRex traffic generator running on a similar machine. To reach 60 Gbps, TRex generated a total of 62 Gbps, which a single Suricata instance running in IDS mode handled without loss. Suricata was loaded with an Emerging Threats ruleset of 14,312 signatures.
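As a quick sanity check on the hardware figures above, the arithmetic below derives the logical CPU count and the nominal aggregate port capacity. It assumes 18 physical cores per E5-2697 v4 socket and treats all four 40GbE ports as receive ports; the variable names are illustrative only.

```python
# Back-of-the-envelope capacity figures for the test system described above.
# Assumptions: 18 physical cores per Xeon E5-2697 v4, 2 hardware threads per core
# (Hyper-Threading on), and all four 40GbE ports used for receiving traffic.
SOCKETS = 2
CORES_PER_SOCKET = 18
THREADS_PER_CORE = 2

NIC_CARDS = 2
PORTS_PER_CARD = 2
PORT_SPEED_GBPS = 40

OFFERED_GBPS = 62  # total load generated by TRex

physical_cores = SOCKETS * CORES_PER_SOCKET                        # 36
logical_cpus = physical_cores * THREADS_PER_CORE                   # 72
port_capacity_gbps = NIC_CARDS * PORTS_PER_CARD * PORT_SPEED_GBPS  # 160
port_utilization = OFFERED_GBPS / port_capacity_gbps               # ~0.39

print(f"physical cores: {physical_cores}")
print(f"logical CPUs:   {logical_cpus}")
print(f"port capacity:  {port_capacity_gbps} Gbps")
print(f"offered load:   {port_utilization:.1%} of port capacity")
```

The 62 Gbps offered load is well under half of the nominal port line rate. Note that this is nominal capacity only; the effective per-card limit can be lower (for example, because of PCIe bandwidth).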

Traffic Details

We used TRex’s stateful traffic generation with profiles that closely simulate an enterprise network. The mix consisted of HTTPS/HTTP browsing (76%), real-time applications such as VoIP and video (12%), and other enterprise traffic replays (12%). The traffic consisted mostly of small, realistic flows rather than elephant flows (a few long-lived, high-bandwidth flows).
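Flow size matters because of how traffic is spread across workers. With receive-side scaling (RSS), the NIC hashes each packet's addresses and ports, so every packet of a flow lands on the same queue and worker: many small flows average out across workers, whereas one elephant flow cannot be split. The sketch below implements the Toeplitz hash commonly used for RSS, assuming an IPv4 TCP/UDP 4-tuple as input. The 0x6D5A-repeating key is one known way to make the hash symmetric, so both directions of a connection reach the same worker. The function names are hypothetical, and real NICs select the queue through an indirection table rather than the simple modulo used here.

```python
import ipaddress
import struct

# A 40-byte key made of the 16-bit pattern 0x6D5A repeated. Because the key is
# periodic with a 16-bit period, swapping src/dst addresses (a 32-bit shift) and
# src/dst ports (a 16-bit shift) leaves the hash unchanged.
SYMMETRIC_KEY = bytes.fromhex("6d5a") * 20


def toeplitz_hash(key: bytes, data: bytes) -> int:
    """Toeplitz hash: for every set bit of `data`, XOR in the 32-bit window
    of `key` that starts at that bit position."""
    key_int = int.from_bytes(key, "big")
    key_bits = len(key) * 8
    if key_bits < len(data) * 8 + 32:
        raise ValueError("key too short for input")
    result = 0
    for byte_index, byte in enumerate(data):
        for bit in range(8):
            if byte & (0x80 >> bit):
                offset = byte_index * 8 + bit
                result ^= (key_int >> (key_bits - 32 - offset)) & 0xFFFFFFFF
    return result


def ipv4_4tuple(src: str, dst: str, sport: int, dport: int) -> bytes:
    """Pack the RSS input in the usual order: src IP, dst IP, src port, dst port."""
    return (ipaddress.IPv4Address(src).packed
            + ipaddress.IPv4Address(dst).packed
            + struct.pack("!HH", sport, dport))


def rss_queue(src: str, dst: str, sport: int, dport: int,
              num_queues: int, key: bytes = SYMMETRIC_KEY) -> int:
    # Simplification: real NICs index an indirection table with the low hash bits.
    return toeplitz_hash(key, ipv4_4tuple(src, dst, sport, dport)) % num_queues


# Both directions of one connection map to the same worker queue.
fwd = rss_queue("10.0.0.5", "192.0.2.10", 51514, 443, num_queues=16)
rev = rss_queue("192.0.2.10", "10.0.0.5", 443, 51514, num_queues=16)
print(fwd, rev)
```

Since the queue depends only on the flow's addresses and ports, an elephant flow always lands on a single worker, which is why a mix of many small flows spreads load more evenly.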

Key Configurations

The test setup was tuned for high performance at both the system and the application (Suricata) level. Key configuration and tuning steps included:

- Maintain NUMA locality between the NICs, memory and the CPU cores that process their traffic.

- Maximize L3 cache hits to sustain high traffic rates.

- Enable receive-side scaling (RSS) hashing to distribute traffic evenly across multiple Suricata worker threads.

- Pin Suricata worker threads to dedicated CPU cores and isolate those cores from other user processes.

- Run all other housekeeping tasks on the remaining cores.
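The NUMA, pinning and housekeeping items above can be expressed as a CPU plan for Suricata's `threading` / `cpu-affinity` section in `suricata.yaml`. The sketch below is an illustration under stated assumptions, not the configuration used in these tests: it takes a NUMA topology as input (for example, as reported by `lscpu`), reserves a few CPUs per node for housekeeping, and gives the remaining CPUs on NIC-local nodes to Suricata workers. The function name `plan_cpu_affinity` and the example topology are hypothetical. Isolating the worker CPUs from the OS scheduler is a separate OS-level step (for example, the `isolcpus` kernel parameter).

```python
from typing import Dict, List


def plan_cpu_affinity(node_cpus: Dict[int, List[int]], nic_nodes: List[int],
                      housekeeping_per_node: int = 2) -> dict:
    """Split logical CPUs into Suricata management and worker sets.

    node_cpus: NUMA node id -> logical CPU ids on that node.
    nic_nodes: NUMA nodes with a capture NIC attached.
    housekeeping_per_node: CPUs per node left for the OS and management threads.
    """
    management: List[int] = []
    workers: List[int] = []
    for node, cpus in sorted(node_cpus.items()):
        cpus = sorted(cpus)
        management.extend(cpus[:housekeeping_per_node])
        remaining = cpus[housekeeping_per_node:]
        if node in nic_nodes:
            # NUMA locality: workers run on the same node as the NIC they read from.
            workers.extend(remaining)
        else:
            # No local NIC: keep these CPUs for other tasks instead of workers.
            management.extend(remaining)
    # Mirrors the layout of Suricata's threading/cpu-affinity settings.
    return {
        "threading": {
            "set-cpu-affinity": True,
            "cpu-affinity": [
                {"management-cpu-set": {"cpu": management}},
                {"worker-cpu-set": {"cpu": workers, "mode": "exclusive"}},
            ],
        }
    }


# Hypothetical topology for 2 x 18-core CPUs with Hyper-Threading, using a
# common Linux numbering where CPU n and CPU n+36 are sibling threads.
topology = {
    0: list(range(0, 18)) + list(range(36, 54)),
    1: list(range(18, 36)) + list(range(54, 72)),
}
plan = plan_cpu_affinity(topology, nic_nodes=[0, 1])
affinity = plan["threading"]["cpu-affinity"]
print("management:", affinity[0]["management-cpu-set"]["cpu"])
print("workers:", len(affinity[1]["worker-cpu-set"]["cpu"]))
```

A real deployment would also need to tie each capture interface to the workers on its own node; that per-interface mapping is outside this sketch.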

Performance Improvements

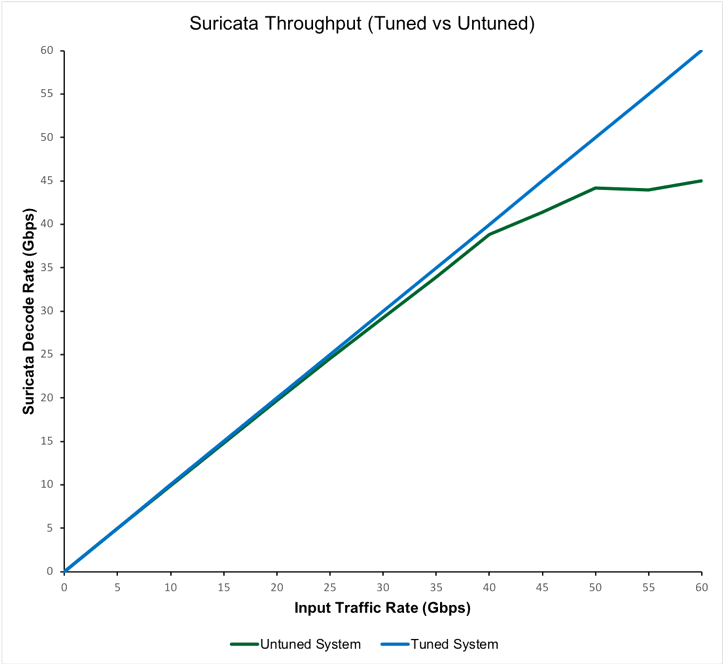

Traffic was tested on a normal setup and a tuned setup. The graph below depicts the Suricata throughput for both the tests.

The untuned system in our lab had no NIC tuning and ran the default Suricata configuration, with only memcap changes to cope with high speeds. On that system Suricata could not keep up with packets at wire speed and dropped traffic. The tuned system raised Suricata throughput to 60 Gbps in our setup.
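Drops like these are typically visible in Suricata's `stats.log`. The following is a minimal sketch for computing a drop ratio from it, assuming the standard text layout (`counter | thread | value`) and the `capture.kernel_packets` / `capture.kernel_drops` counters reported by capture methods such as AF_PACKET. The function names are hypothetical and the sample values are made up for illustration. Whether `kernel_packets` already includes dropped packets depends on the capture method, so treat the ratio definition as an assumption.

```python
def parse_stats_totals(text: str) -> dict:
    """Return {counter: value} for 'Total' rows of a Suricata stats.log dump.
    Later dumps overwrite earlier ones, so the newest values win."""
    totals = {}
    for line in text.splitlines():
        parts = [p.strip() for p in line.split("|")]
        if len(parts) == 3 and parts[1] == "Total" and parts[2].isdigit():
            totals[parts[0]] = int(parts[2])
    return totals


def drop_ratio(totals: dict) -> float:
    # Assumption: kernel_packets counts every packet the kernel saw, including drops.
    packets = totals.get("capture.kernel_packets", 0)
    drops = totals.get("capture.kernel_drops", 0)
    return drops / packets if packets else 0.0


# Hypothetical excerpt in stats.log layout; the numbers are illustrative only.
SAMPLE = """\
Counter                                       | TM Name                   | Value
capture.kernel_packets                        | Total                     | 1000000
capture.kernel_drops                          | Total                     | 2500
"""

totals = parse_stats_totals(SAMPLE)
print(f"drop ratio: {drop_ratio(totals):.4%}")
```

A lossless run, as reported for the tuned system, would show a ratio of zero over the whole test.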

Future Work

The setup used in these tests is the current configuration of Red Piranha’s Crystal Eye Series-80 UTM appliances, which are suited to high-end security deployments for telecoms and organizations with large IT needs. Similar tuning will be applied in the Crystal Eye firmware for other appliances to achieve the best performance at their respective traffic rates.

The full tuning information and results can be found here.